By looking at the approach, products and services of an innovative startup, we gain a better understanding of what Artificial Intelligence applied to the manufacturing industry really looks like: what objectives it can serve, and with what advantages, costs and (cultural) barriers to entry. Interview with Andrea Calcagni, co-founder of Neural Factory.

Artificial Intelligence is the talk of the day all over the world and in every field, both for the benefits it promises and for the risks it entails. In manufacturing, however, the term is often misused, leading to confusion between traditional software, advanced automation, and generative AI models. The result is that many companies struggle to understand how (and whether) these technologies can be concretely applied to their machines, plants and production processes to raise operational efficiency, reduce machine downtime and make increasingly scarce technical expertise available. We talked about it with Andrea Calcagni, taking a very pragmatic approach based on real cases.

Let’s open with your startup: what is Neural Factory and what does it do?

Neural Factory develops Generative Artificial Intelligence solutions applied to the technical and operational data of manufacturing companies. The goal is to transform an often fragmented and

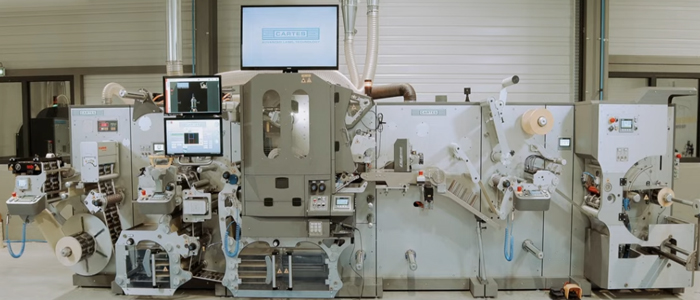

unstructured business knowledge into digital tools that can support technicians, production operators, technical offices and operations departments. We work on very heterogeneous sources: machine manuals, electrical and hydraulic diagrams, PLC code (e.g. SCL), production database, history of technical assistance emails, training videos and internal procedures. Everything is engineered to be queryable in natural language, but within a logic consistent with real industrial processes. We do not sell generic AI, but vertical solutions designed around customer-specific processes, including the packaging, printing and converting supply chain.

What needs do machine manufacturers and converters express?

The most pressing issue is the shortage of specialized technicians, aggravated by poorly structured generational turnover and by the increasingly short tenure of new hires. A significant part of the know-how remains in the heads of senior technicians or is scattered across difficult-to-consult documentation. This increases both downtime and dependence on a few key figures.

And how do you respond?

With dedicated and customized software. Our main product is an advanced troubleshooting assistant for the industrial context. The system integrates manuals, technical diagrams, PLC code, communications history and training materials, and guides the operator in fault diagnosis: checks to be performed, components involved, possible causes and follow-up checks. It can also automatically identify the correct spare part for a specific machine and serial number by navigating the associated diagrams.

Are we talking about “self-repairing” machines?

No. We are talking about advanced decision-making support for technicians and operators, not full autonomy, which remains a long-term prospect: current models do not yet have a real physical understanding of the world. However, the reduction in diagnosis and spare parts identification time is already so significant today that, in many cases, machine downtime tends to zero, with immediate economic benefits.

Does this apply only to failures, or to process issues as well?

Both, but with different approaches. For unstructured problems – procedures, adjustments, best practices – the assistant works as a digital tutor for less experienced operators, as long as the relevant knowledge is well documented. For structured problems, related to machine logic or interfaces, we can analyze PLC code and process data, reaching very high levels of precision.

Do you also work on the organization of production?

Yes. We are developing advanced data analysis modules connected to company databases to support production scheduling, analysis of actual order costs and verification of resource availability. AI allows you to simulate dozens of alternative scenarios, many more than can be evaluated manually, to reduce job changeovers or maximize compatibility between orders.
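To give a concrete feel for what "simulating alternative scenarios" can mean, here is a minimal sketch in Python. It is not Neural Factory's method, and it uses plain exhaustive search rather than generative AI. The order list, the material names and the rule that a changeover occurs whenever two consecutive orders use different materials are all illustrative assumptions.

```python
from itertools import permutations

# Hypothetical orders: (order id, material). Illustrative data only.
ORDERS = [("A", "PET"), ("B", "paper"), ("C", "PET"), ("D", "paper"), ("E", "PET")]


def count_changeovers(sequence):
    """Count adjacent order pairs that require a different material."""
    return sum(1 for prev, nxt in zip(sequence, sequence[1:]) if prev[1] != nxt[1])


def best_sequence(orders):
    """Evaluate every possible ordering and return one with the fewest changeovers."""
    return min(permutations(orders), key=count_changeovers)


baseline = count_changeovers(ORDERS)  # changeovers if orders run as listed
best = best_sequence(ORDERS)          # one of the best orderings found

print("as listed:", baseline, "changeovers")
print("best found:", [order_id for order_id, _ in best], count_changeovers(best), "changeovers")
```

Five orders already yield 120 possible sequences, which is why manual evaluation covers only a handful of them. Exhaustive search stops being practical beyond roughly ten orders, where heuristics or dedicated solvers would be needed.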

But doesn’t traditional software already offer these functions?

Partly, yes, but these are rigid vertical tools that cannot connect structured and unstructured data. The novelty of generative AI is that it allows you to query data and business knowledge in natural language, connecting all sources, structured and unstructured. The innovation is not in the model itself, but in the engineering of each company's specific knowledge into these models.

What level of preparation do you find in companies?

There is awareness of problems, but less clarity about solutions. It is often necessary to start by working on technological literacy, even in structured companies, to clarify what AI can really do and overcome unrealistic expectations.

What are the main difficulties you encounter?

The collection, formatting and formalization of knowledge, hindered by incomplete, outdated or dispersed documentation. AI is not plug & play: it must be trained on each company, as if it were a very bright junior hire who must learn internal processes and logic.

What economic returns can be obtained?

It depends on the case, but even a 15-20% reduction in service times or machine downtime can generate significant ROI, especially on high-value systems.
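As a back-of-the-envelope illustration of that claim, the sketch below turns a downtime reduction into a first-year ROI figure. Only the 15-20% range comes from the interview. The downtime hours, hourly downtime cost and annual solution cost are hypothetical placeholders to be replaced with a plant's own numbers.

```python
# All three inputs below are hypothetical assumptions, not data from the interview.
DOWNTIME_HOURS_PER_YEAR = 200    # unplanned downtime on one high-value line
COST_PER_DOWNTIME_HOUR = 1_500   # lost margin plus service cost, in EUR
ANNUAL_SOLUTION_COST = 30_000    # setup share plus running costs, in EUR


def annual_savings(reduction):
    """Savings from cutting downtime by the given fraction (e.g. 0.15)."""
    return DOWNTIME_HOURS_PER_YEAR * COST_PER_DOWNTIME_HOUR * reduction


def roi(reduction):
    """Simple ROI: net gain divided by what was spent."""
    return (annual_savings(reduction) - ANNUAL_SOLUTION_COST) / ANNUAL_SOLUTION_COST


for reduction in (0.15, 0.20):
    print(f"{reduction:.0%} reduction: savings {annual_savings(reduction):,.0f} EUR, "
          f"ROI {roi(reduction):.0%}")
```

The result scales linearly with the hourly cost of downtime, which is why the effect is strongest on high-value systems.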

And the costs? How do they measure up?

The traditional logic of “all-inclusive” license costs for a fixed service does not apply here. An AI project, especially one based on Generative AI, introduces a significant variable cost component tied to consumption. It is critical that entrepreneurs understand this is not a one-time purchase, but a model with recurring costs, similar to a utility like electricity. These costs are primarily driven by token consumption and by the cloud computing resources required for inference. If the system is not properly engineered and usage is not strictly controlled (for example, by optimizing the retrieval process or fine-tuning models to be more efficient), the monthly bill can skyrocket. Therefore, in addition to initial setup, data formalization, and model training costs, a complete view of the Total Cost of Ownership (TCO) must also include meticulous attention to infrastructure efficiency and operational monitoring of consumption.
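The consumption-driven part of the bill can be estimated with simple arithmetic. The sketch below is a rough model only: the per-token prices, query volumes and token counts are hypothetical and do not reflect any specific vendor's pricing or Neural Factory's actual figures. It compares a setup that sends a large retrieved context with each question against one where retrieval has been tuned to send less.

```python
# Hypothetical prices in EUR per million tokens; real prices vary by provider and model.
PRICE_INPUT_PER_M = 3.0
PRICE_OUTPUT_PER_M = 15.0


def monthly_token_cost(queries_per_day, working_days, input_tokens, output_tokens):
    """Estimated monthly inference cost from per-query token counts."""
    cost_per_query = (input_tokens * PRICE_INPUT_PER_M
                      + output_tokens * PRICE_OUTPUT_PER_M) / 1_000_000
    return queries_per_day * working_days * cost_per_query


# Same usage, different amounts of retrieved context sent with each question.
unoptimized = monthly_token_cost(500, 22, input_tokens=8_000, output_tokens=500)
optimized = monthly_token_cost(500, 22, input_tokens=3_000, output_tokens=500)

print(f"large context: {unoptimized:.2f} EUR/month")
print(f"tuned retrieval: {optimized:.2f} EUR/month")
```

In this model the cost grows in direct proportion to the number of queries and to the context size, so both are worth monitoring alongside the fixed setup costs.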

How will this software evolve?

Towards increasingly integrated systems connecting data, technical documentation and models, with a better understanding of physical and process issues. However, the critical factor will remain cultural adoption: continuous training and a rethinking of workflows are required, because it is not simply a matter of introducing new software.